John Z Black

Brunswick, ME • (207) 245-1010 • contact@johnzblack.com

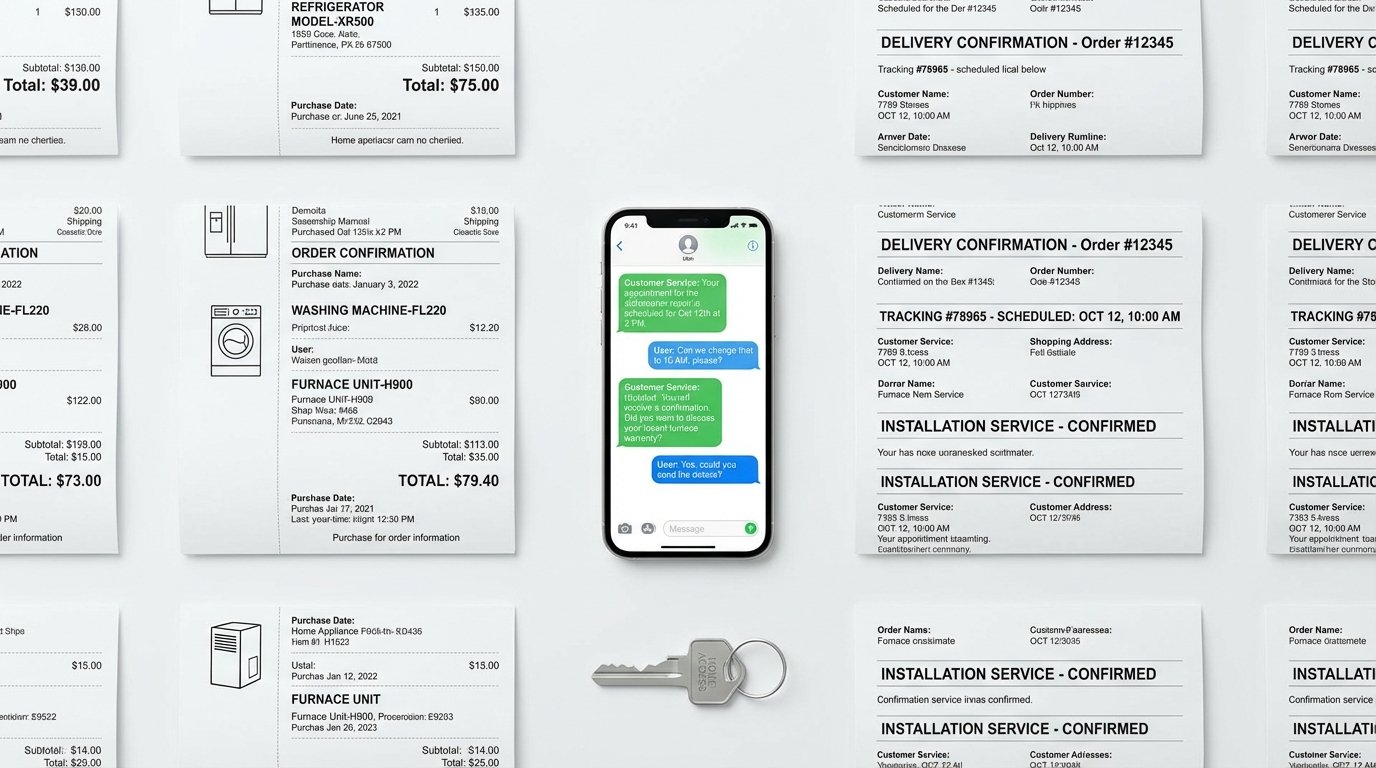

When you called Sears Home Services to schedule an appliance repair, you gave your name, your address, the brand and model of the broken machine, and when you’d be home for the technician. Routine stuff.

Turns out that conversation may have been sitting in an unsecured database. Along with 3.7 million others.

Security researcher Jeremy Fowler discovered three publicly accessible Sears databases containing 3.7 million customer service chat logs and 1.4 million audio files from the company’s AI chatbot. No login or other authentication required. Just data, readable by anyone who found it.

The records included full names, phone numbers, home addresses, appliance details, and delivery and repair appointment times.

This Is a Physical Security Problem

Most data exposure stories stay in the digital realm. This one doesn’t.

Think about what that combination of data actually tells someone with bad intentions: your full name, your street address, what expensive appliances are in your home, and the exact window when a technician is scheduled to visit. In other words, when you’ll be home, and what’s worth taking.

That’s not a theoretical risk. That’s a burglar’s shopping list. WIRED’s reporting on the story singled out the combination of address data and scheduling information as the most concerning element, and Fowler, who has found dozens of exposed databases, shared that assessment.

The Bigger Pattern

The Sears story isn’t really just about Sears. It’s about what happens when companies deploy AI chatbots for customer service without thinking about what they’re collecting, how long they’re keeping it, and who can access it.

Every conversation with a customer service AI is a data point. Enough data points and you have a detailed profile: someone’s home, their appliances, their schedule. Collected with no friction, stored by default, secured as an afterthought.

A recent analysis of AI companion apps – the emotional support and chatbot services millions of people use – found the same pattern: intimate conversation histories left exposed or inadequately protected. Users share things with these apps they wouldn’t tell most people. The apps failed to secure the infrastructure around that trust.

What Should Have Happened

None of this is technically difficult to prevent. Collect only what you need for the transaction. Don’t retain it for years after the service is complete. Require authentication to access stored records. Encrypt data at rest.

These aren’t exotic requirements. They’re table stakes. Sears apparently didn’t get any of them right.
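For readers who build these systems, here is a minimal sketch of the first two practices, collecting only what the transaction needs and dropping records after a retention window, in Python. Everything in it is a hypothetical illustration: the field names, the 90-day window, and the `ChatRecord`, `minimize`, and `purge_expired` names are assumptions, not anything known about Sears’ actual systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical retention window: how long a record survives after the job is done.
RETENTION = timedelta(days=90)

# Hypothetical allow-list: only the fields needed to book and complete a repair.
ALLOWED_FIELDS = {"name", "phone", "address", "appliance_model", "appointment_window"}


@dataclass
class ChatRecord:
    fields: dict
    # None means the service ticket is still open.
    service_completed_at: Optional[datetime] = None


def minimize(raw: dict) -> dict:
    """Keep only allow-listed fields; anything else is never stored."""
    return {k: v for k, v in raw.items() if k in ALLOWED_FIELDS}


def purge_expired(records: list, now: datetime) -> list:
    """Return open tickets plus closed ones still inside the retention window."""
    return [
        r for r in records
        if r.service_completed_at is None
        or now - r.service_completed_at < RETENTION
    ]


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    raw = {
        "name": "Jane Doe",
        "phone": "555-0100",
        "address": "1 Main St",
        "appliance_model": "XYZ-123",
        "appointment_window": "Tue 1-5pm",
        "full_transcript": "...",  # hypothetical extra field, dropped by minimize()
    }
    stored = ChatRecord(minimize(raw), service_completed_at=now - timedelta(days=120))
    print(sorted(stored.fields))
    print(len(purge_expired([stored], now)))  # 0: past the retention window
```

Authentication and encryption at rest are mostly handled at the database and infrastructure layer, so they aren’t shown here. The point of the sketch is only that the first two controls amount to a few lines of policy, not a research project.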

As of this writing, Sears has made no public statement about whether the databases have been secured or whether affected customers have been notified.

If you’ve used Sears Home Services, your conversation may be in those logs. You can’t take it back. What you can do going forward: treat AI customer service systems like the databases they are. They’re not confidential. They’re not private. Share accordingly.