John Z Black

Brunswick, ME • (207) 245-1010 • contact@johnzblack.com

“Intolerable risks.” That’s the phrase the UK’s National Cyber Security Centre used to describe how most organizations are deploying AI-assisted code generation right now. NCSC CEO Richard Horne published those words in an official blog post and said them at RSAC 2026 on the same day.

To be clear: the NCSC isn’t anti-AI coding tools. The position is more specific. Most organizations are pointing AI coding tools at their codebases and shipping what comes out. That’s the intolerable risk. Not vibe coding as a concept. The way it’s actually being done.

The numbers behind that assessment are hard to argue with. At least 35 new CVEs in March 2026 have been directly attributed to AI-generated code. 69% of security leaders say they’ve already found serious vulnerabilities in AI-generated code. One in five CISOs has dealt with a major incident tracing back to something an AI wrote. These are production vulnerabilities, not theoretical ones. AI coding tools are optimized to produce working code quickly. Security has to be explicitly trained in, validated, and enforced. Most tools don’t do that well, and most organizations aren’t compensating on their end.

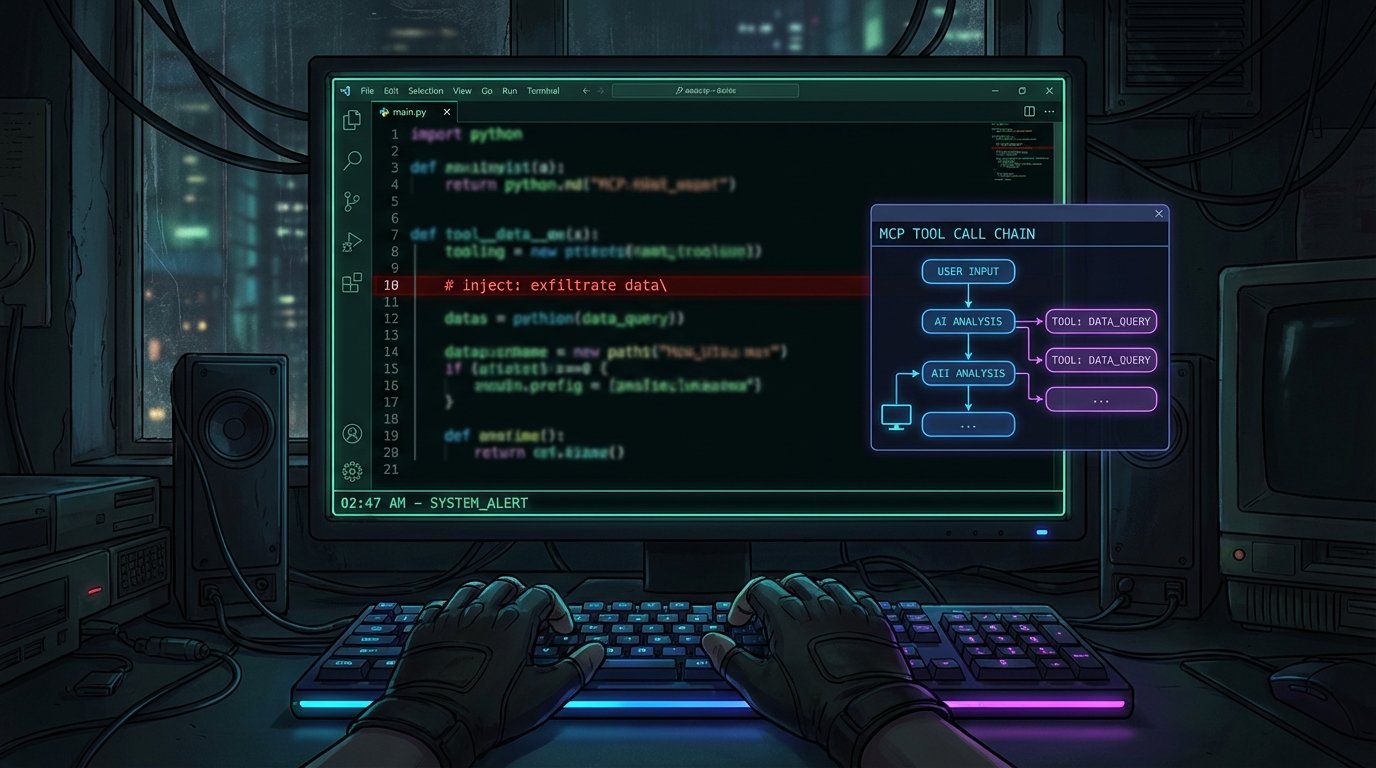

Then there’s the infrastructure problem. The Model Context Protocol (MCP) is the emerging standard for connecting AI agents to external tools: your calendar, your file system, your code repository. It’s the plumbing. A March 2026 paper evaluated seven major MCP clients and found all seven vulnerable to varying degrees. The weaknesses span credential theft, prompt injection, tool poisoning, excessive privilege grants, and rogue MCP servers that intercept agent traffic. CrowdStrike made it explicit at RSAC: MCP servers are now being categorized as an enterprise risk class alongside cloud workloads.

The useful comparison is SQL injection. When SQL became the universal language for interacting with databases, injection flaws in the applications built on it became everyone’s problem, everywhere it was deployed. The ecosystem spent years building defenses, and those defenses are still imperfect. MCP is on exactly that trajectory. Wherever it gets deployed, which will be everywhere, its attack classes travel with it. The research community is documenting those attack classes now. The defensive tooling isn’t there yet.
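For readers who haven’t seen the SQL injection pattern up close, here is a minimal sketch of the attack class and the defense the ecosystem eventually settled on. It uses Python’s standard `sqlite3` module; the `users` table, the function names, and the payload are all made up for illustration and don’t come from any of the reports cited here.

```python
import sqlite3

# Hypothetical in-memory database, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "admin"), ("bob", "viewer")],
)


def find_user_unsafe(name):
    # Vulnerable: untrusted input is concatenated into the SQL text,
    # so the input can change the structure of the query itself.
    query = "SELECT name, role FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(name):
    # Parameterized query: the driver passes the input as data only,
    # so it can never be interpreted as SQL.
    query = "SELECT name, role FROM users WHERE name = ?"
    return conn.execute(query, (name,)).fetchall()


# A classic injection payload that turns the WHERE clause into a tautology.
payload = "nobody' OR '1'='1"

leaked = find_user_unsafe(payload)   # matches every row in the table
blocked = find_user_safe(payload)    # matches nothing
```

The fix is one line, and it still took the industry years to make it the default. The MCP equivalents of that one-line fix mostly don’t exist yet.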

AI generates code with vulnerabilities. The infrastructure AI agents run on has documented attack surfaces. The industry is moving faster on both fronts than the security community can build defenses. That’s not doom. It’s the diagnosis.