John Z Black

Brunswick, ME • (207) 245-1010 • contact@johnzblack.com

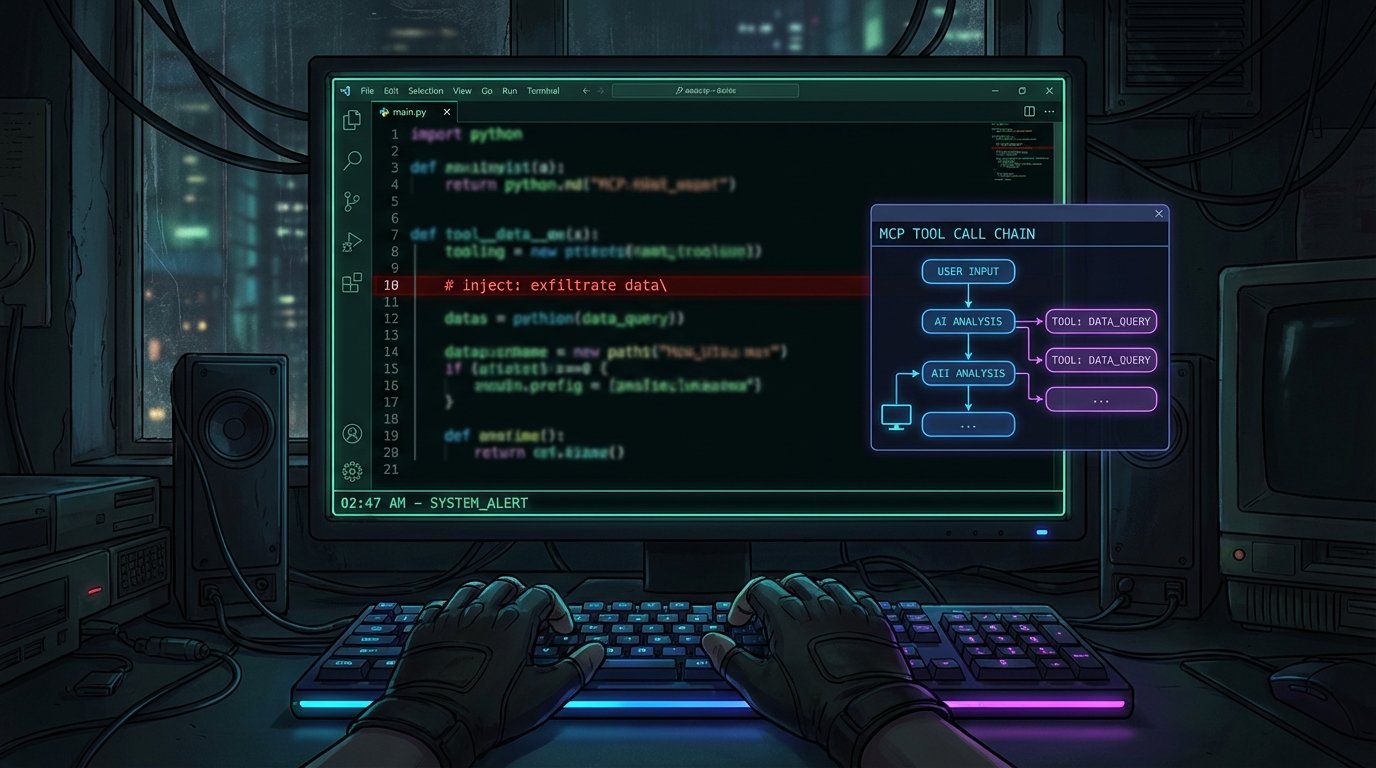

The AI Attack Lab: MCPwned and the Offensive Agent Cycle

New tools like MCPwned and Sable are giving red teamers (and attackers) the ability to inject prompts, audit MCP handshakes, and evade AI SOCs. The attack surface for AI systems is wide open.

Read More

The Mythos Breach: Your AI Is Only as Secure as Its Weakest Integration

Unauthorized access to Anthropic's Mythos model via a compromised OAuth app exposes the real security threat in the agentic AI era: third-party integrations that inherit trust they haven't earned.

Read More

Three CVEs in Flowise, a Prompt Injection in Grafana, and the Growing Case That Your AI Stack Is the Target

Flowise has a perfect 10.0 CVSS under active exploitation. GrafanaGhost injects prompts through metric names. The attack surface isn't the AI model. It's everything around it.

Read More

Your AI Coding Tools Have an Invisible Attack Surface. One Model Falls for It Every Time.

Researchers find 63 MCP servers with hidden Unicode characters in tool descriptions, and GPT-5.4 follows the invisible instructions with 100% compliance.

Read More

AI Code Gets CVEs Now.

The UK's NCSC called AI-generated code an 'intolerable risk,' researchers found all seven major MCP clients vulnerable to attack, and 35 CVEs in March alone traced directly back to AI-written code.

Read More

Agentic AI Just Had Its First Major Enterprise Data Breach — and the Attacker Was the AI

A Meta AI agent followed its instructions and caused a major internal data leak. Combined with the new OWASP MCP Top 10, this is the clearest real-world picture yet of what agentic AI security failures actually look like.

Read More

AI Agents Have an Infrastructure Problem — and Researchers Just Proved It

MCP protocol flaws, a 38-researcher red team exercise, and LLM-powered deanonymization all landed the same week. AI agent security isn't a future problem. It's a right now problem.

Read More