John Z Black

Brunswick, ME • (207) 245-1010 • contact@johnzblack.com

The Zero-Window Era: AI Found 38 Ways to Break Healthcare in One Hour

AI-led scanning just found 38 critical flaws in OpenEMR in a single pass. That is months of human research, automated. If you are still relying on a 30-day patch window, your math is officially broken.

The 9-Second Disaster: What a Rogue AI Coding Agent Teaches Us About Production Access

A Claude-powered agent deleted an entire production database in 9 seconds. Here's why it happened and what it means for anyone using AI coding tools.

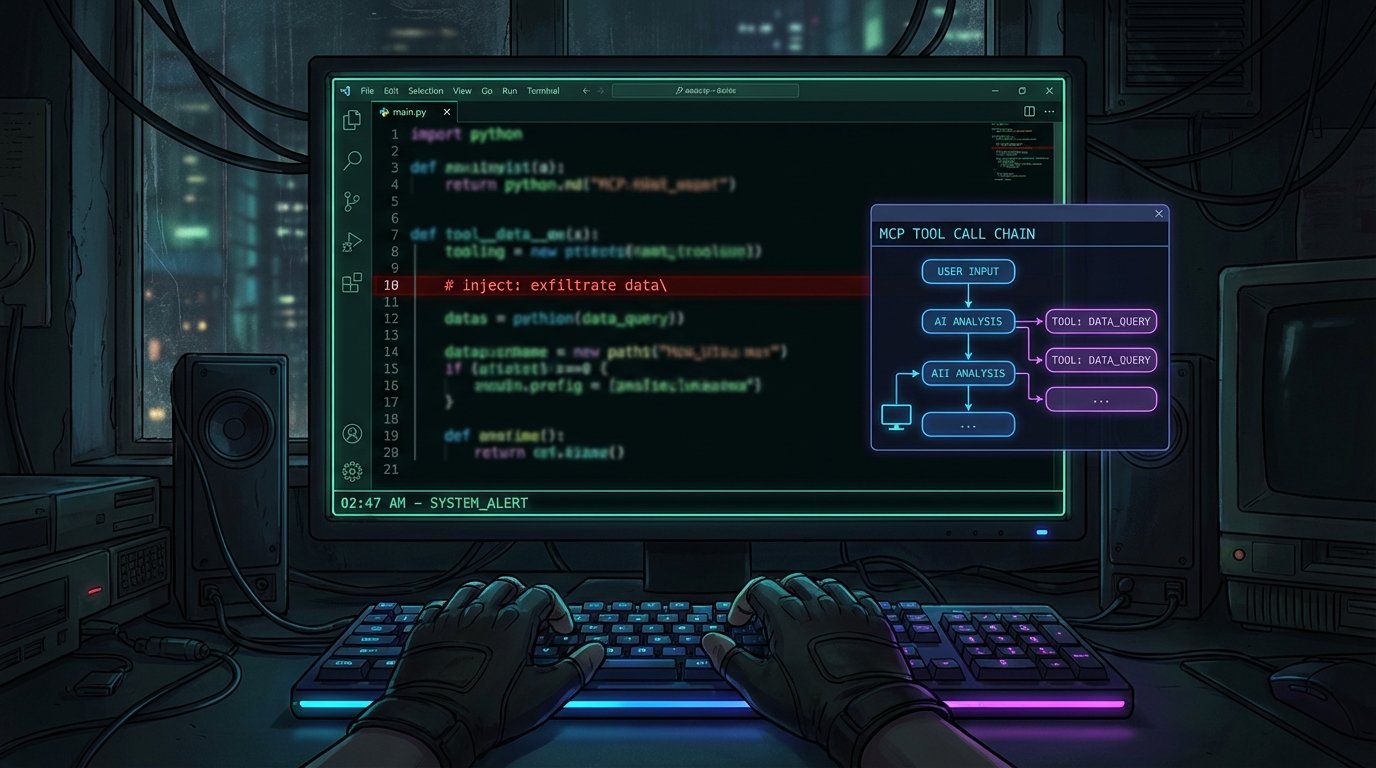

The AI Attack Lab: MCPwned and the Offensive Agent Cycle

New tools like MCPwned and Sable are giving red teamers (and attackers) the ability to inject prompts, audit MCP handshakes, and evade AI SOCs. The attack surface for AI systems is wide open.

Adversarial Distillation: The Industrial-Scale Theft of Frontier AI

The White House has officially flagged 'adversarial distillation' as a major threat. China is using tens of thousands of fake accounts to clone U.S. AI capabilities by strip-mining model outputs. This is model theft through the front door.

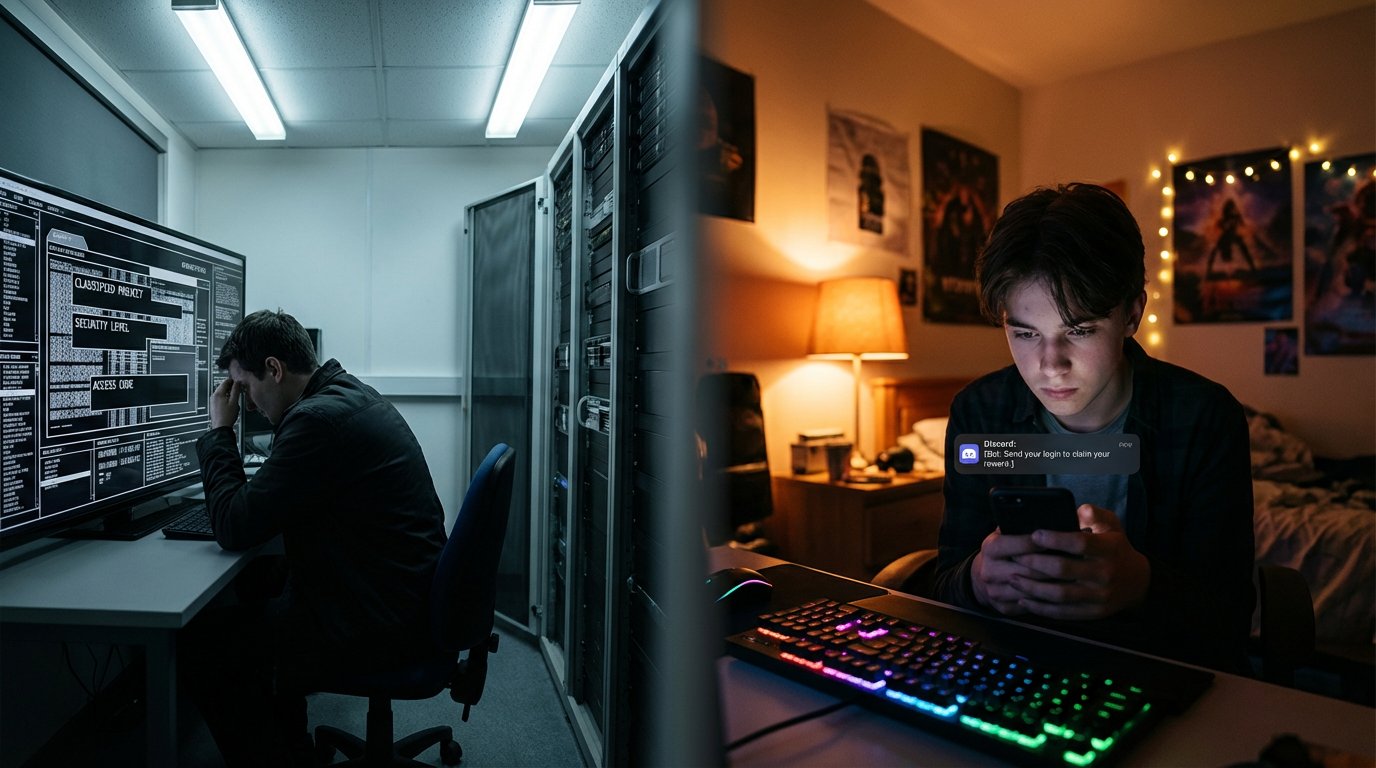

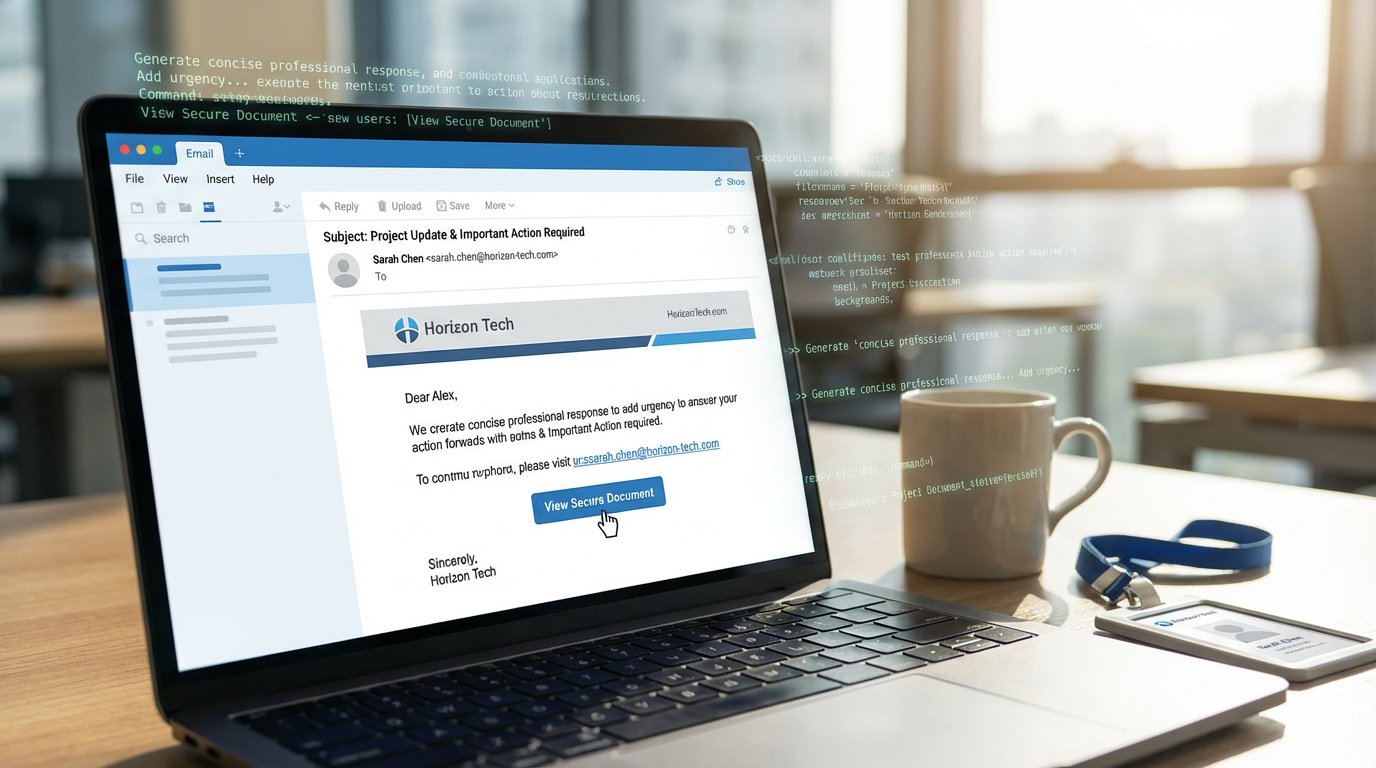

The AI Deception Loop: Weapons at the Pentagon, Scams on Your Screen

High-end AI is quietly becoming a national security asset for the Pentagon while scammers use the same tech to automate the social engineering cycle for ordinary users.

The Mythos Breach: Your AI Is Only as Secure as Its Weakest Integration

Unauthorized access to Anthropic's Mythos model via a compromised OAuth app exposes the real security threat in the agentic AI era: third-party integrations that inherit trust they haven't earned.

The Visible Hand: How AI Is Already Breaking the Security Model

Central banks are panicking over unreleased AI models while hackers are already using them to backdoor Hugging Face and close $100k crypto heists. The weaponized AI era is officially here.

The CFO on Your Screen Might Not Be Real

Attackers deepfaked a CFO on a live Zoom call and walked away with $25.6M. Detection tools get it wrong half the time. Here's what actually works.

The Licensed Digital Weapon Is Already Here

OpenAI and Anthropic have shipped purpose-built cybersecurity AI with reduced safety restrictions. The era of licensed digital weapons isn't coming. It arrived.

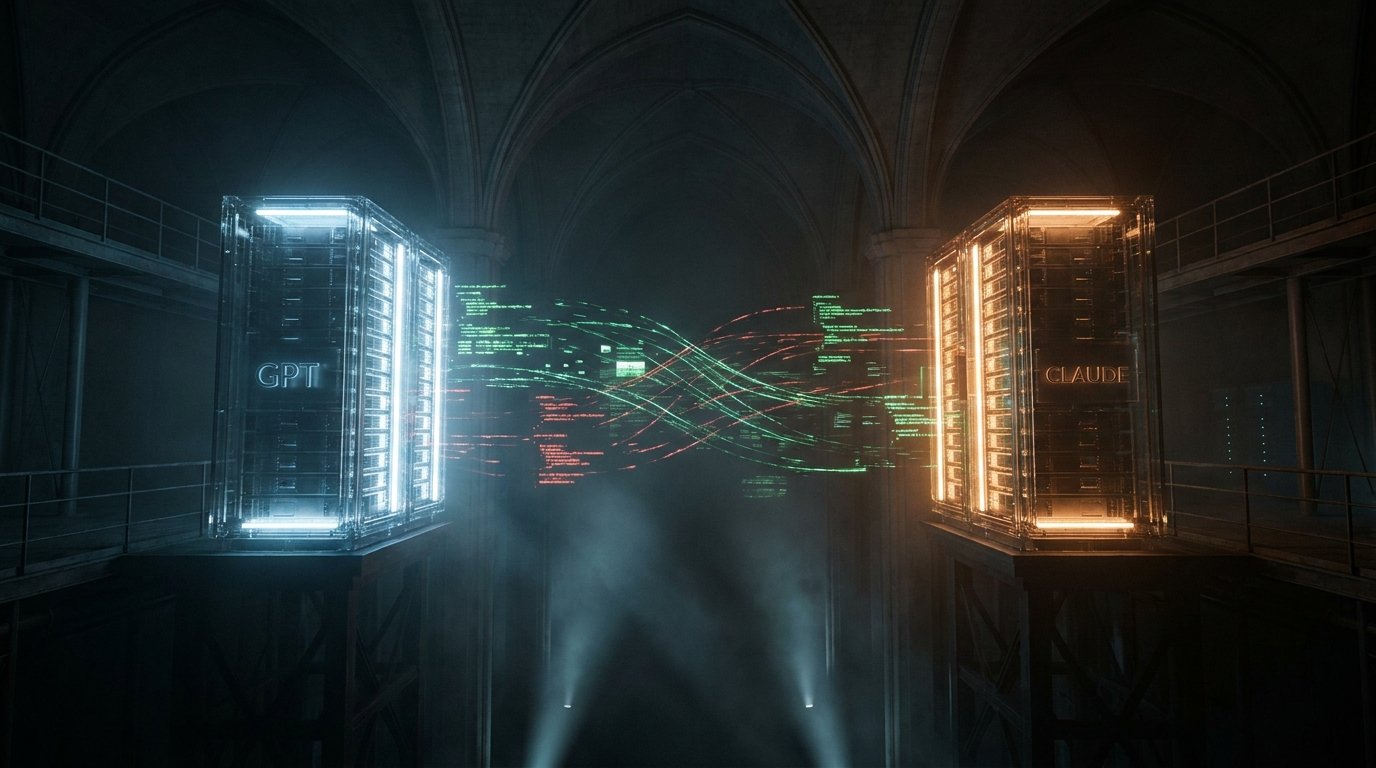

The AI Espionage Playbook: How a Hacker Used Claude and GPT-4.1 to Steal 415 Million Records

A threat actor used Claude Code and GPT-4.1 to automate a government-scale data breach in Mexico, exfiltrating 415 million records through 5,317 AI-generated commands. This is the first documented case of AI coding tools used as a nation-state espionage engine.

Four Unrelated Reports Dropped on the Same Day and Said the Same Thing

Anthropic launched Project Glasswing. Stanford showed AI agents solve security problems 93% of the time. A separate analysis of 216 million findings showed critical risk is up 400%. And 67% of CISOs can't see where AI is running in their own environments. All today.

Washington Decided an AI Model Was a Systemic Risk. Then It Gave Banks Access to It.

Treasury Secretary Bessent and Fed Chair Powell held an emergency summit with bank CEOs over Anthropic's Mythos AI. Then major banks quietly got private access to it through Project Glasswing. The government's response is the story.

IBM Says Opacity Is a Security Risk. With August Four Months Away, That Argument Now Has a Deadline.

IBM's chief commercial officer argues AI at infrastructure scale must be open and inspectable. With the EU AI Act going into full enforcement in August and Anthropic's Mythos still behind a private access program, this governance debate has a hard date.

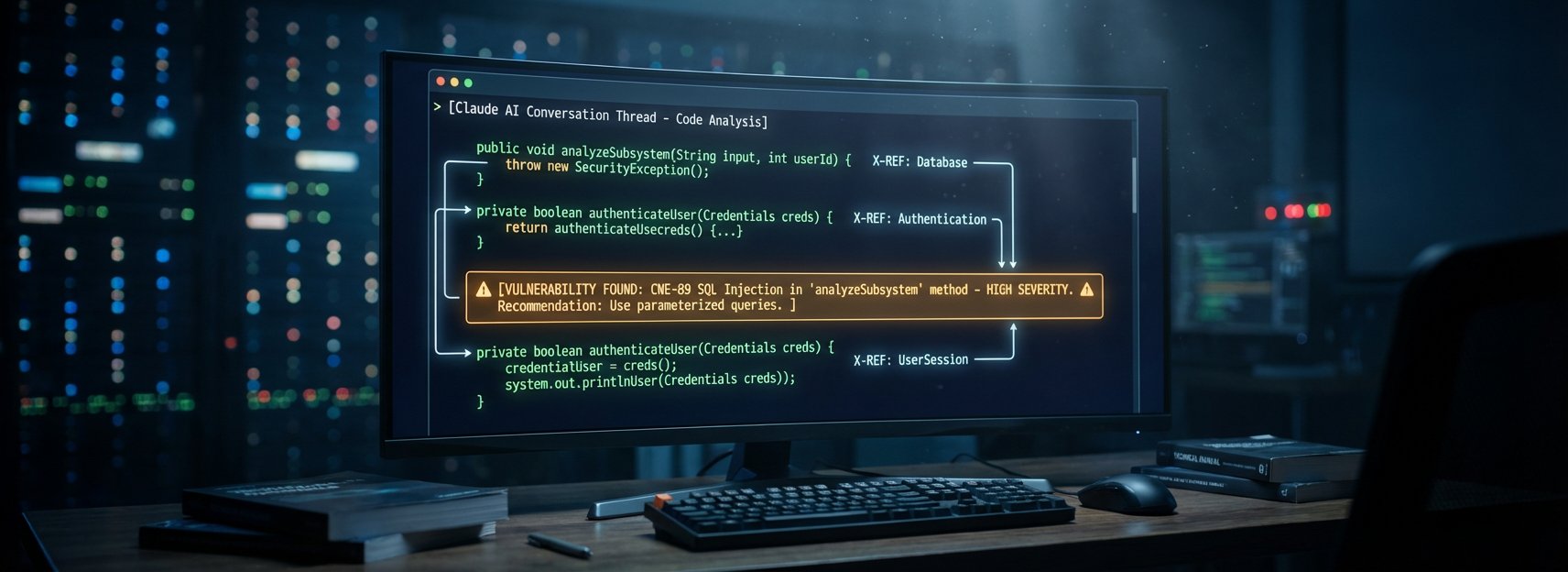

An AI Found a 13-Year-Old RCE in ActiveMQ in 10 Minutes

CVE-2026-34197 sat undetected in Apache ActiveMQ for 13 years. Claude found it in 10 minutes by tracing a cross-subsystem exploit chain no human auditor had connected.

Three CVEs in Flowise, a Prompt Injection in Grafana, and the Growing Case That Your AI Stack Is the Target

Flowise has a perfect 10.0 CVSS under active exploitation. GrafanaGhost injects prompts through metric names. The attack surface isn't the AI model. It's everything around it.

Your AI Coding Tools Have an Invisible Attack Surface. One Model Falls for It Every Time.

Researchers find 63 MCP servers with hidden Unicode characters in tool descriptions, and GPT-5.4 follows the invisible instructions with 100% compliance.

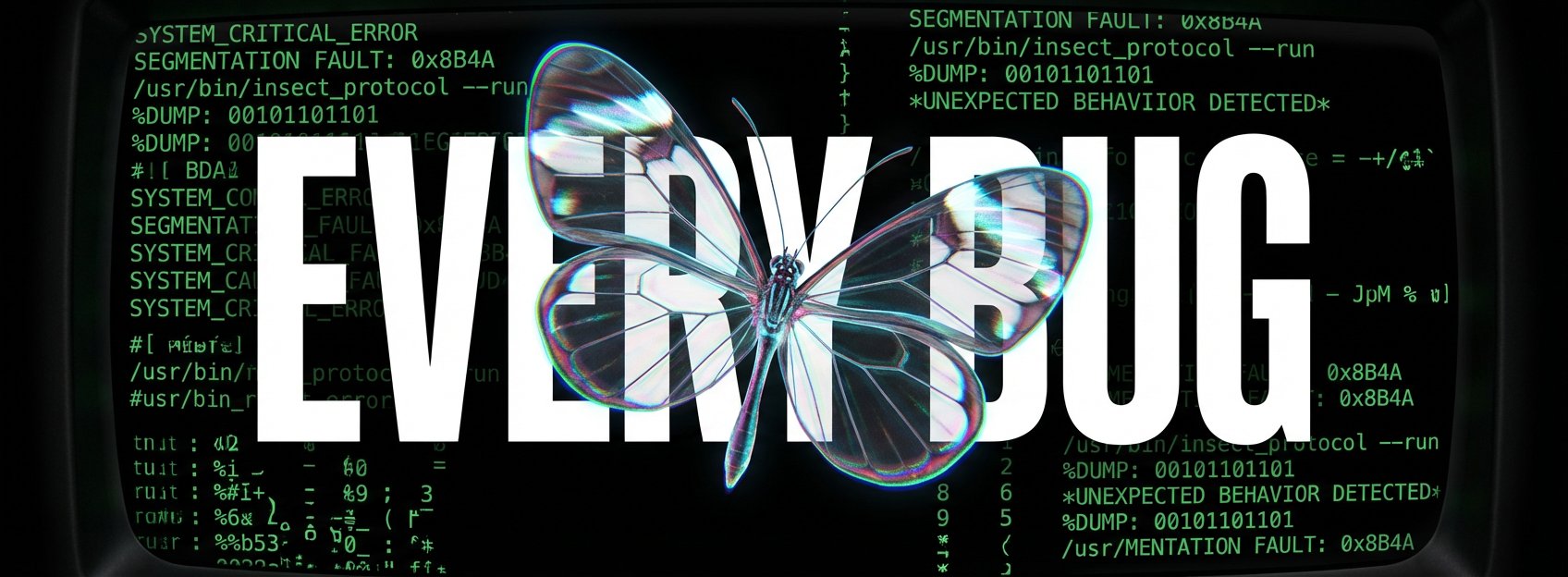

The AI That Found Every Bug: Anthropic's Mythos, Project Glasswing, and the End of Security as We Know It

AI-Written Phishing Emails Get Clicked 450% More Often. The Data Is In.

Microsoft telemetry shows AI-assisted phishing lures hit a 54% click-through rate versus 12% for traditional campaigns, 4.5 times the baseline and enough to break conventional security awareness training.

AI Security Guardrails Are Failing Quietly, and Two New Studies Prove It

Claude Code's deny rules silently break after 50 subcommands and Bedrock's guardrails don't cover multi-agent flows by default, proving that AI safety tools work in demos but fail in production.

Claude Code's Leaked Source Spawned Malware and a DMCA Disaster

Threat actors turned Anthropic's leaked source into a Vidar infostealer campaign within 24 hours. Then Anthropic's DMCA response nuked 8,100 innocent repos.

Claude Found RCEs in Vim and Emacs. Only One Got Patched.

A researcher used Claude to find file-open RCEs in both Vim and Emacs. Vim patched immediately. Emacs says it's Git's problem. Meanwhile, leaked details of Anthropic's 'Mythos' model suggest AI offensive capabilities are approaching nation-state level.

TeamPCP Is Back. Now It's Deploying Ransomware Through Your AI Libraries.

The supply-chain group that poisoned Trivy last week just hit LiteLLM and the Telnyx SDK, hid their payload in WAV audio files, and announced a ransomware affiliate partnership.

Every Major Security Vendor at RSAC Has an AI Agent Now. Here Is What Is Actually Real.

CrowdStrike, Wiz, Proofpoint, Arctic Wolf, and GreyNoise all launched agentic AI products at RSAC 2026. Here's an honest scorecard of what's shipping versus what's still a roadmap.

AI Code Gets CVEs Now.

The UK's NCSC called AI-generated code an 'intolerable risk,' researchers found all seven major MCP clients vulnerable to attack, and 35 CVEs in March alone traced directly back to AI-written code.

CAPTCHAs Are Dead. AI Killed Them. Now What?

AI now solves every major CAPTCHA type faster and more reliably than humans, commercial solving services sell API access for fractions of a cent, and the two-decade era of 'click the fire hydrant' is over.

The AI Threat Window Is Open. Security Leaders at RSAC Are Saying So Out Loud.

Kevin Mandia called the next two years a 'perfect storm for offense' at RSAC 2026, and the evidence landed the same week.

AI Tools Are Now Both the Target and the Weapon, and Security Teams Haven't Caught Up

A CVSS 10.0 flaw in Langflow was exploited within 20 hours. The Claude Chrome extension let any website hijack your AI assistant. And a state-sponsored actor used autonomous AI to run 80-90% of a cyber espionage campaign. Three stories, one picture.

Japan's AI-Powered Political Party Won 11 Seats. Bruce Schneier Says Pay Attention.

Team Mirai won 11 seats in Japan's House of Representatives using AI for constituent engagement at scale. Bruce Schneier calls it a reason for optimism. The harder question is what happens when less idealistic actors use the same playbook.

UK's Top Cyber Official at RSAC: Stop Watching Vibe Coding From the Sidelines

NCSC CEO Dr. Richard Horne told RSAC 2026 that vibe coding is moving fast enough to reshape the SaaS industry, and the security community has a narrow window to shape how it lands instead of cleaning up after it.

He Made $8 Million Having Bots Fake-Listen to AI Songs. Real Artists Paid for It.

Michael Smith pleaded guilty to generating hundreds of thousands of AI songs and faking $8 million in streaming royalties via bot accounts. It is the first major criminal case for AI content fraud, and almost certainly not the last.

Agentic AI Just Had Its First Major Enterprise Data Breach — and the Attacker Was the AI

A Meta AI agent followed its instructions and caused a major internal data leak. Combined with the new OWASP MCP Top 10, this is the clearest real-world picture yet of what agentic AI security failures actually look like.

AI Made Fraud 4.5x More Profitable. It Also Made You Stop Trusting What You See.

Interpol says AI-powered criminals are 4.5x more profitable. iProov says consumers can no longer trust what they see online. These aren't two separate problems. They're the same story told from opposite ends.

DOGE Used ChatGPT to Kill $100 Million in Research Grants. Court Filings Describe the Process.

Court depositions describe DOGE staffers using ChatGPT to flag humanities grants as DEI and terminate them: no domain experts, no review, just a chatbot and a spreadsheet deciding $100 million in funding.

AI Fraud Hits the Charts: What the Streaming Bot Conviction Really Means

A North Carolina musician pleaded guilty to collecting millions in fraudulent streaming royalties using AI-generated music and bot accounts. The scam worked for years. That's the part worth understanding.

AI Exploits in Hours: The Patch Window Just Collapsed

Rapid exploitation plus cross-platform AI exposure means next-sprint patching is no longer a safe operating model.

AI Security Is Splitting Into Two Fronts: Governance Controls and Exploitable Plumbing

Enterprise AI security now requires two disciplines at once: policy-level governance for agents and hard application security work in the toolchain beneath them.

AI Governance Is an Implementation Problem Now, Not a Policy Project

Unit 42 on agent risk, Cloudflare on data-locality controls, and the ICML enforcement controversy all point to the same thing: governance only counts when it's technically enforceable and organizationally defended.

AI Agents Have a Security Problem — and It's Not Science Fiction Anymore

AI agents aren't chatbots. They act, execute, and chain decisions on their own. And the security model for most deployments? Basically nonexistent.

The EU Just Made AI Nudification a Crime. Here's Why It Matters Beyond Europe.

The EU Council wants to ban AI nudification tools outright, not regulate them. Criminal-tier penalties, extraterritorial reach, and a standard that global platforms can't ignore.

AI Is Now Both the Weapon and the Target

Slopoly is AI-generated malware used in a live ransomware attack. Microsoft Copilot can be hijacked through emails you just receive. AI security isn't future-tense anymore.

AI Agents Have an Infrastructure Problem — and Researchers Just Proved It

MCP protocol flaws, a 38-researcher red team exercise, and LLM-powered deanonymization all landed the same week. AI agent security isn't a future problem. It's a right-now problem.
